Docker For Mac Disk Performance

For about two years, I’ve wanted to use Docker for local development. Hypothetically, it offers all the benefits of virtualized development environments like Vagrant (stable, re-creatable, isolated, etc.) but requires fewer resources.

Working at a consultancy, sometimes I need to switch back and forth between multiple projects in a day. Spinning a full VM up and down can take a while. Alternatively, running two or more virtual machines at once can eat up all of my computer’s resources.

While I’d been interested in Docker for a while, I hadn’t had the time and energy to really dive into it. Then I went to DockerCon this April, which finally gave me enough momentum to figure out how to integrate it into my development workflow.

Sharing is Caring (About File IO Speed)

I suspected, correctly, that Docker would fall prey to some of the same shortcomings as Vagrant. Specifically, I am thinking of the speed of shared volumes. Generally speaking, sharing files between macOS and a virtual OS on a hypervisor breaks down when too many reads or writes are required in a short amount of time. This often happens when running asset pipelines like those of Rails or Ember, which generate tons of temporary files.

Most sharing systems (VirtualBox, NFS) do not support ignoring subdirectories. That wouldn’t be so bad, except that many framework tools do not let you configure the location of dependencies and temp directories. I’m looking at you, Ember.

What’s a Developer to Do?

Generally, this problem leads you to one of the following solutions:

- Doing the reads/writes on the host and pushing the finished output into the container/guest

- Using SSHFS to share files out of the container/guest back to the host

- Using rsync or similar utilities that have to run separately but allow for ignoring subdirectories

- Editing the code in the container via SSH plus Vim or Emacs

Why I Wasn’t Satisfied

Each of the solutions mentioned above has drawbacks:

- Building on the host: You risk drift between your host machine and the development environment, and you have to install all the tooling on your host for all versions used by your projects.

- SSHFS: Personally, I’ve found this very flaky, especially if your computer goes to sleep. Searching the shared files from the host can be slow, to say nothing of editor tooling with compiled languages like TypeScript or C#. Additionally, having the code “live” in something as ephemeral as a Docker container seems like a bad idea.

- rsync: I think it’s annoying to have to run another process. Plus, rsync is one-way, so changes in the container will not be reflected on the host (e.g. output from Rails generators). Of course, there are other options in this space, such as Unison, that are bi-directional.

- Editing in the container: I miss some of the features/layout of editors like VS Code or Atom. And you’ve got to find a way to get all your favorite Vim or Emacs configs into the container. This solution also suffers from the same drawbacks as SSHFS with regard to code living in the container.

Of course, there’s one option for Docker that sidesteps this whole issue. You could develop in a windowed Linux environment (native or virtual) that functions as the Docker machine. File sharing between the Docker machine and containers is extremely fast. But if you want to stay in macOS and use Docker, I have a few tips to make your life better.

Docker-sync and Upcoming Changes

Docker-sync is a very handy Ruby gem that makes it easy to use rsync or unison file sharing with Docker. Rsync and unison allow you to exclude subdirectories, so you can ignore ./tmp, ./node_modules, ./dist, and so on. This gem even takes file sharing a step farther, using Docker volumes in conjunction with rsync/unison for optimum performance.
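As a rough sketch of what this looks like, a docker-sync.yml along the following lines defines a synced volume and excludes the noisy directories. The sync name `app-sync` and the exclude list are assumptions for illustration rather than my actual project config, so check the docker-sync documentation for the options your version supports.

```yaml
# docker-sync.yml (illustrative sketch; the sync name is hypothetical)
version: "2"
syncs:
  # Name of the Docker volume that docker-sync creates and keeps in sync.
  app-sync:
    src: "./"                 # host directory to sync into the volume
    sync_strategy: "unison"   # two-way sync; "rsync" would be one-way
    sync_excludes:            # directories that never leave the container
      - "tmp"
      - "node_modules"
      - "dist"
```

The resulting volume is then declared as an external volume in docker-compose.yml and mounted into the service in place of a plain bind-mount.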

For instance, in my Rails project’s Dockerfile, I ADD just enough files to run bundle install, then I mount in my source directory via docker-compose. It’s important to remember that this wipes whatever you ADDed during the build. So, for example, if you add a gem to your Gemfile and bundle install, you’ll eventually need to rebuild your base image.

Generally, you should just run docker-compose up --build to make sure your image doesn’t get too out of date. Also, if you are using rsync, you’ll need to docker cp the automatically updated Gemfile.lock back out of the container to the host.

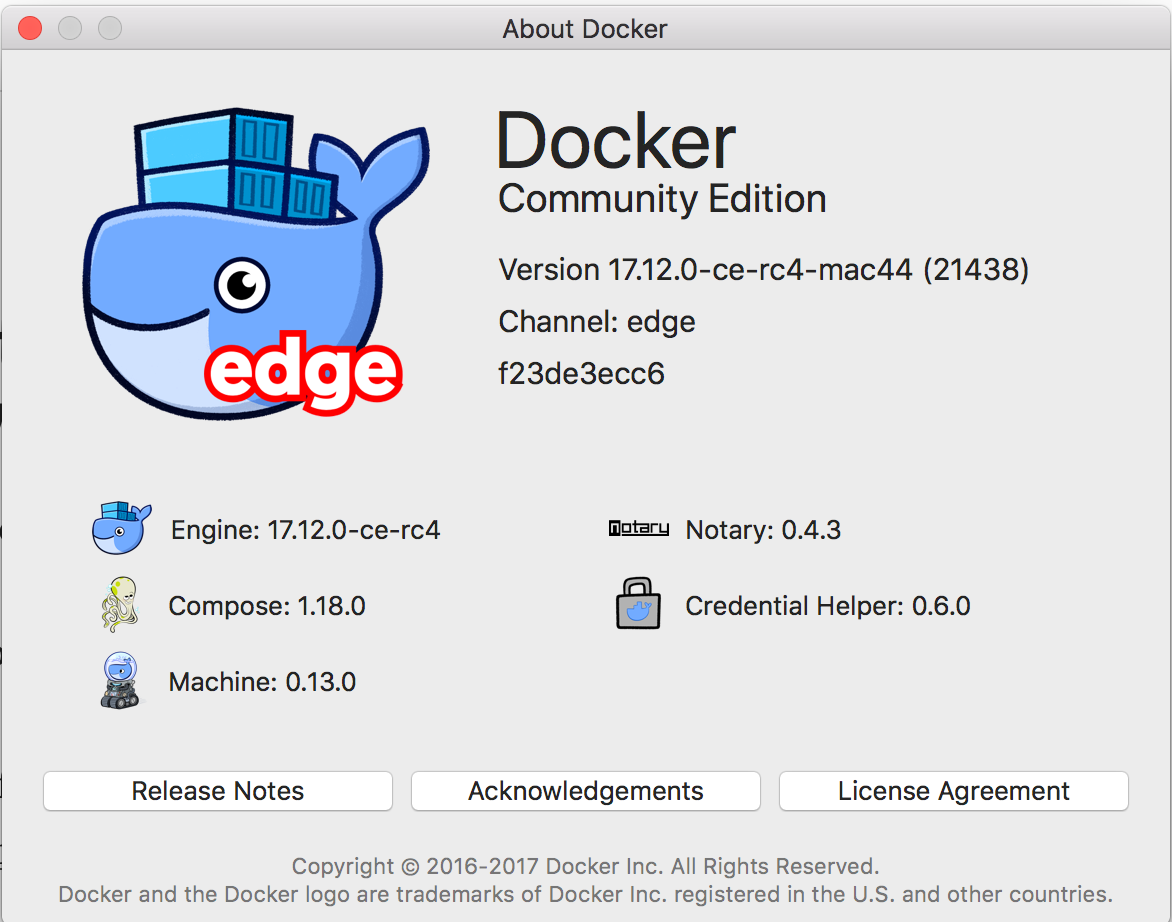

Docker is working on some improvements to Docker for Mac that may significantly improve the speed of reads and writes, provided you are okay with eventual consistency. The improvements for reading speed are coming soon, but last I checked, the improvements for writing speed (the more common problem in my experience) are still a ways out.

Slow IO: It’s More than Just Volume Mounting

Another disk IO problem you might run into using Docker for Mac is slow database speed. I noticed this when our Rails database migrations took around 10 times longer to run on Docker for Mac versus native.

After a bit of searching, I found this script on a GitHub issue. Running the script brought performance back to approximately the same as native. Hopefully, this issue will be fixed soon, and the script will be unnecessary.

I Still Want to Docker All the Things

Developing in Docker isn’t perfect, but the workflow is improving rapidly. So is the whole Docker ecosystem.

Multi-stage builds are going to streamline the process of creating development and production images. The Moby project will help developers pick and choose what parts of the tools they want to use.

I have faith that in a year or two, the file sharing/disk IO speed problems will be a distant memory. The container revolution is just getting started.

If you’re at all on the fence, I encourage you to try out Docker. For me, it wasn’t until I ported an existing project into Docker that I began to understand the big picture.

If you’ve moved your development environment to Docker, you may have noticed that your web application stack is slower than the native environment you were used to. There are a few things we can do to bring response times back down to where they were (or thereabouts).

Overview

1. Volume optimisations

Modify your volume bind-mount consistency. Consistency cache tuning in Docker follows a user-guided approach. We prefer delegated for most use-cases.

2. Use shared caches

Make sure that common resources are shared between projects — reduce unnecessary downloads, and compilation.

3. Increase system resources

The default RAM limit is 2GB. Raising it to 4GB is unlikely to affect system performance on most Macs. Consider increasing the CPU limit too.

4. Further considerations

A few final tips and tricks!

Introduction

Most of our web projects revolve around a common Linux, Nginx, MySQL, PHP (LEMP) stack. Historically, these components were installed on our machines using Homebrew, a virtual machine, or some other application like MAMP.

At Engage, all our developers use Docker for their local environments. We’ve also moved most of our pre-existing projects to a Dockerised setup too, meaning a developer can begin working on a project without having to install any prerequisites.

When we first started using Docker, it was incredibly slow compared to what we were used to: sharp, snappy response times similar to those of our production environments. The development quality of life wasn’t the best.

Why is it slower on Mac?

In Docker, we can bind-mount a directory on the host (your Mac) into a Docker container. This gives the container a view of the host’s file system. In literal terms, it points a particular directory in the container to a directory on your Mac. Any writes on either the host or the container are then reflected on the other side.

On Linux, keeping a guaranteed consistent view between the host and container has very little overhead. In contrast, keeping the file system consistent on macOS and other platforms carries a much bigger overhead, which leads to a performance degradation.

Docker containers run on top of a Linux kernel, meaning Docker on Linux can use the native kernel, and the underlying virtual file system is shared between the host and container.

On Mac, we’re using Docker Desktop. This is a native macOS application, which is bundled with an embedded hypervisor (HyperKit). HyperKit provides the kernel capabilities of Linux. However, unlike Docker on Linux, any file system changes need to be passed between the host and container via Docker for Mac, which can quickly add a lot of computational overhead.

1. Volume optimisations

We’ve established that bind-mounts can be slow on Mac (see above).

One of the biggest performance optimisations you can make is relaxing the guarantee that file system data is perfectly replicated between the host and container. Docker defaults to a consistent guarantee that the host’s and container’s file systems reflect each other.

For the majority of our use cases at Engage, we don’t actually need perfect consistency between container and host. We can allow for slight delays and temporary discrepancies in exchange for greatly increased performance.

The options Docker provides are:

| Option | Behaviour |
| --- | --- |
| Consistent | The host and container are perfectly consistent. Every time a write happens, the data is flushed to all participants of the mount’s view. |
| Cached | The host is authoritative in this case. There may be delays before writes on a host are available to the container. |
| Delegated | The container is authoritative. There may be delays until updates within the container appear on the host. |

The file system delays between the host and the container are usually too short for a person to notice. However, certain workloads could require increased consistency. I personally default to delegated, as generally our bind-mounted volumes contain source code. Data is only changing when I hit save, and it’s already been replicated via delegated by the time I’ve got a chance to react.

Other mounts, such as our shared Composer and Yarn caches, could benefit from Docker’s cached option. Programs are persisting data there, so it might be more important that writes from the container are reliably replicated to the host.

The consistency option is set per volume in docker-compose.yml.
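A minimal sketch of such a configuration follows. The service name `app`, the image, and the paths are assumptions chosen for illustration, not our actual project setup. It shows both the short volume syntax, where the consistency mode is appended after the container path, and the long syntax, where it is a separate key.

```yaml
# docker-compose.yml (illustrative sketch; service name and paths are hypothetical)
version: "3.7"
services:
  app:
    image: php:7.4-fpm
    volumes:
      # Short syntax: the consistency mode is the third colon-separated field.
      - ./:/var/www/html:delegated
      # Long syntax: the same kind of setting spelled out as a key.
      - type: bind
        source: ./storage
        target: /var/www/html/storage
        consistency: consistent
```

Here the source code is mounted as delegated, while a directory that needs a stricter guarantee keeps the default consistent mode.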

Docker doesn’t relax consistency by default, and for good reason: a system that was not consistent by default would behave in ways that were unpredictable and surprising. Full, perfect consistency is sometimes essential.

Further reading: https://docs.docker.com/docker-for-mac/osxfs-caching/

2. Using shared caches

Most of our projects use Composer for PHP and Yarn for frontend builds. Every time we start a Docker container, it’s a fresh instance. Downloading packages over HTTP adds a lot of latency and slows the initial build of a project to a snail’s pace, because Composer and Yarn have to re-download all their packages each time.

Another great optimisation is to bind-mount a ‘docker cache’ volume into the container, and use this across similar projects. Composer would then pull packages from this local cache instead of the web.

We set up this bind-mount in the Docker Compose configuration.
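A sketch of a shared cache mount might look like the following. The image name, the `~/.docker-cache` host directory, and the `/cache` container paths are hypothetical. `COMPOSER_CACHE_DIR` and `YARN_CACHE_FOLDER` are the environment variables Composer and Yarn 1.x read to locate their caches, and the image is assumed to have both tools installed.

```yaml
# docker-compose.yml (illustrative sketch; image and paths are hypothetical)
version: "3.7"
services:
  app:
    image: example/php-dev:latest   # assumed to ship Composer and Yarn
    environment:
      # Point both tools at the mounted cache directories.
      COMPOSER_CACHE_DIR: /cache/composer
      YARN_CACHE_FOLDER: /cache/yarn
    volumes:
      - ./:/var/www/html:delegated
      # One cache directory on the host, reused by every project.
      - ~/.docker-cache/composer:/cache/composer:cached
      - ~/.docker-cache/yarn:/cache/yarn:cached
```

Because every project mounts the same host directory, a package downloaded for one project is already cached when the next one installs it.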

3. Increasing system resources

If you’re using a Mac, chances are you have a decent amount of RAM available. Docker uses 2GB of RAM by default. A simple performance tweak is to increase the RAM limit available to Docker. On most machines, giving Docker Desktop an extra 2GB of RAM does no harm and noticeably helps memory-intensive operations.

You can also tweak the number of CPUs available, which helps particularly during times of increased I/O load, e.g. running yarn install. Docker will be synchronising a lot of file system events and actions between host and container, which is particularly CPU-intensive. By default, Docker Desktop for Mac uses half the number of processors available on the host machine. Consider increasing this limit to alleviate I/O load.

4. Further considerations

This post isn’t exhaustive, as I’m sure there are other optimisations that can be made based on the context of each kind of setup. In our use cases though, we’ve found these tweaks can greatly improve performance.

Some final things to consider are:

- Ensure the Docker app is running the latest version of Docker for Mac.

- Ensure your primary drive, Macintosh HD, is formatted as APFS. This is Apple’s current proprietary file system and comes with a few performance optimisations versus older formats.

Final notes

Docker are always working on improving the performance of Docker for Mac, so it’s a good idea to keep your Docker app up to date in order to benefit from these performance optimisations. Most file system I/O performance gains can be made within the hypervisor/VM layers. Reducing the I/O latency requires shortening the data path from a Linux system call to macOS and back again. Each component in the data path requires tuning, and in some cases, requires a significant amount of development effort from the Docker team.

Matthew primarily focuses on web application development, with a focus on high traffic environments. In addition, he is also responsible for a lot of our infrastructure that powers all of our various apps and systems.